Reflections on The AI Doc: Or How I Became an Apocaloptimist

Why the Biggest Challenges of AI Technology are Human.

“This is the last mistake we’ll ever get to make” — Aza Raskin

This week, we brought together a diverse audience of over 150 experts, including academics, journalists, and people working in tech, for a special screening of The AI Doc: Or How I Became an Apocaloptimist (which is now out in theaters!). Several newsletter subscribers also joined us in person.

After the screening, Anni hosted a panel discussion with Jay; Jonathan Haidt, author of The Anxious Generation; and Academy Award-winning producer Jonathan Wang. The room was buzzing afterwards, so we decided to share a recap of the discussion with the rest of the world.

While much of today’s conversation focuses on how AI (Artificial Intelligence) will shape the future, we took a step back and asked a different question: How can we guide AI toward a future we want, and how do we keep that future human?

“The rare feeling of being in a room assembled with real purpose, where the aim was not simply to generate more noise around AI, but to think more carefully about what this moment demands of us.” — Event attendee, Paul Gimenez

Our Key Take-aways:

1) Watch the documentary, but don’t watch it alone

Is it a super accessible primer on the promise and perils of AI that your family should see? Yes. — Event attendee

We have been studying the psychology of technology for over a decade, but the documentary rattled many of us in a new way. Watching together, we were on a roller coaster of despair, excitement, and hope. Jay said his first reaction was existential terror. His second reaction was hope—especially when people spoke about collective action.

This is a huge problem that will require a lot of collective action (…) hearing everyone react to it made it feel like we were all (…) reaching a common ground. — Event attendee

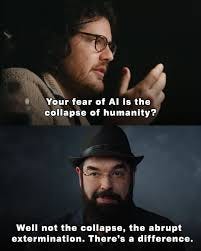

2) AI will supercharge the good and the bad

AI can potentially help us solve climate change, find a cure for cancer, and offer children around the globe access to individualized, high-quality tutoring. Yet, the same powerful technology can advance bioweapons, feed children sexualized content, and erode our shared understanding of truth. The two outcomes are intrinsically intertwined.

Jay and Jonathan Haidt have written a lot about how tech companies are incentivized to maximize attention because this keeps users on their platforms and engaging more. But this can have a number of downstream consequences, including polarization, distorted norms, and declining adolescent mental health. Meta just lost two trials this week: a $370 million lawsuit over child sexploitation and another $4.2 million lawsuit over addictive design features that harm users.

I expect it will become essential viewing for anyone trying to think seriously about artificial intelligence and its human consequences. — Event attendee

AI may perpetuate this problem: In a set of studies led by Steven Rathje, Jay finds that people enjoy interacting with sycophantic chatbots (chatbots that validate beliefs indiscriminately) more than with balanced chatbots, and that interacting with sycophantic chatbots led to more extreme political beliefs. And a new study came out this week showing that AI chatbots are 50% more sycophantic than humans. The authors concluded “that seemingly innocuous design and engineering choices can result in consequential harms”. This is a recipe for disaster.

3) Misaligned incentives and the psychology of AI arms races

The documentary explains that there is no good AI vs. bad AI. The promise and peril are inextricably linked, so it is the underlying incentive structures that we have to understand and guide. These incentives are currently steering us in the wrong direction, creating a race similar to the nuclear arms race during the Cold War: companies and countries are incentivized to cut corners in AI safety to avoid losing the race.

What future should we strive for and how do we get there?

The documentary challenged us to reflect on what future we want and what it means to be human.

As we have seen with social media, it is difficult to predict the impact of new technology. Social media played a key role in democratic movements such as the Arab Spring, but it was also linked to the Rohingya genocide. We need to learn from that and design the technology to avoid these risks, rather than dealing with the damage to individuals and society in hindsight.

Jonathan Haidt urged us to create resilient societies with a strong immune system to buffer unpredicted stressors: societies with institutions that can react fast and citizens who have moral maturity and wisdom. This is a tall order for our current political leadership, so the burden will fall on each of us to advocate for better solutions.

Psychological research can help us get there. For instance, the study by Jay and Steve also found that engaging with sycophantic chatbots did not increase belief extremity among participants who scored high in intellectual humility. These individuals were more receptive to chatbots that gave them critical feedback and a well-rounded view of an issue.

The documentary producer, Jonathan Wang, spoke about the need to focus on human beauty and creativity. In a world that gets more and more complex and noisy from the abundance of content, people are craving meaning, beauty, and genuine social connections, all uniquely human experiences. Human-made art and storytelling, rather than synthetic and hyperpersonalized content, may be one way to get there.

4) The psychology of collective action

Changing incentives and institutionalizing alternatives requires coordination and collaboration at an unprecedented scale. As the world enters an era of democratic instability, intergroup conflict, geopolitical isolationism, and distrust, this may seem impossible. But psychological research and practical experience can help us come together.

We have studied intergroup conflict for several decades and found that a shared sense of identity and superordinate goals can encourage people to collaborate instead of compete. Research also finds that changing social norms may be a powerful tool to mitigate selfish behavior. For instance, a study conducted in Finland found that Finnish students were less susceptible to selfish behavior, likely because Finland scores high on social norms of cooperation.

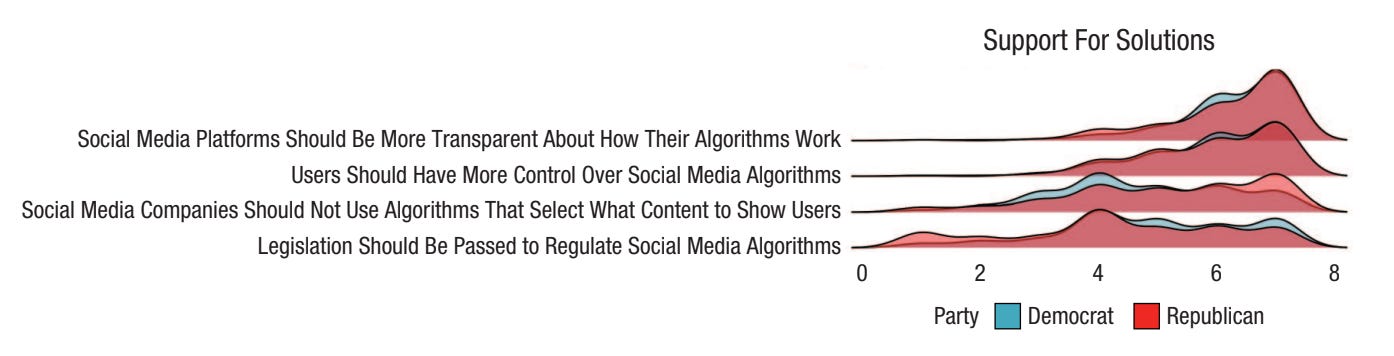

One of the biggest contributions of the movie is that it gives us a common language and knowledge around AI and how it affects us personally and society at large. Common knowledge is essential for breaking pluralistic ignorance and coordinating action. Jay noted that our own research finds that the overwhelming majority of people want transparent algorithms and they want control over these algorithms (see figure below).

If we are largely on the same page, you may ask how we can actually rally people. A PhD student in our lab, Danielle Goldwert, is studying this question for her dissertation. She finds that a feeling of collective efficacy is critical to mobilize people. This sentiment is echoed in historic events like the civil rights movement:

“Never doubt that a small group of thoughtful, committed citizens can change the world; indeed, it’s the only thing that ever has.” — Margaret Mead

Agency and hope

Claiming our agency was one of the biggest themes of the movie and the panel discussion. Hopelessness is the enemy of change. Indeed, one study finds that a sense of agency predicted collective action, but only among groups that also scored high in hope.

How do we find hope?

A recent poll finds that 80% of U.S. adults believe the government should maintain rules for AI safety and data security, even if it means developing AI capabilities more slowly. Recently, the U.S. Senate voted 99-to-1 to strike a proposal that would limit state-level regulation of AI. In such a deeply polarized country, this should give us hope. We can agree on things; we just need to find the same language and collaboration to get things done.

Our panelist Jonathan Haidt said that it is the most exciting time to be a social psychologist. We couldn’t agree more. Our field has all the tools to study these issues. Understanding these dynamics may prove very helpful for taking action. You can build your own understanding by watching the movie, talking about it with your friends and family, and becoming a part of the conversation.

The documentary will be released nationwide in theaters March 27. Catch it in your local theater and find out where you are on the Apocaloptimist Scale.

We thank the NYU Arts and Science Office of Research and Focus Features for their partnership in making this event possible.

Notes of the week

This screening was the first in a broader series of events we’ll be opening up to newsletter subscribers, including online courses for premium subscribers led by Jay and Dom, so keep your eyes peeled for more to come.

In the meantime, Jay was interviewed on NPR's Hidden Brain about the power of social identity. We often think of beliefs and decisions as individual choices. But in many cases, they are shaped by our social identities. When people join groups, they don’t just coordinate behavior—they begin to see the world through a shared lens.

This has profound implications for leadership and conformity, organizational culture, intergroup polarization, and collective action, the very themes we discussed in this newsletter about AI. But social identity also helps explain why changing minds can be so difficult: you’re often engaging with identity, not just information.

If you’re interested, you can listen to the full discussion here.

What concerns me most is not just the capability of these systems, but our capacity to meet them with discipline.

Our response time is slow.

Our will is fragmented.

And our impulse? Elite-tier.

We didn’t exactly ace the “handle social media responsibly” test. In fact, we haven’t come close to fixing that (yet).

This next exam is even more consequential.

Our collective impulse—unchecked—has repeatedly shaped outcomes before we fully understood the stakes. That combination deserves far more scrutiny than it’s currently receiving.

I grew up in the 60s and 70s. Personal computers didn't become a thing until I was in my tweens.

I remember experimenting with Eliza and derivative chatbots. When I use AI today, I know there is nothing real there, but I realize people today who grew up with the internet don't recognize the artificiality of it.

I think there needs to be more education regarding the philosophy and ethics of the internet.