How social media is harming society

We describe the recent lawsuits against social media companies and how the harm extends beyond kids to the rest of society

Over the past few weeks, attitudes toward social media have shifted dramatically. A pair of landmark court rulings last month found that social media platforms like Facebook and Instagram used “addictive design” and harmed young users.

Last week, juries in two different states delivered multimillion-dollar verdicts against Big Tech. A New Mexico jury handed down a $375 million verdict in a case brought by the state’s attorney general against Meta for enabling child sexual exploitation. The next day, a California jury awarded a young woman a combined $6 million in damages from Meta and YouTube for the allegedly addictive and mentally distressing properties of social media apps, including algorithmic curation and so-called infinite scroll, where the app continually provides you with new content as you scroll down the page.

These verdicts have catalyzed a "tidal cultural shift" toward viewing social media companies not just as content platforms, but as manufacturers of products that require regulation, similar to the classic legal cases against "Big Tobacco". In fact, this parallel is exactly how some Meta employees felt about their attempts to target kids.

The focus of the public has shifted from the content posted on platforms and content moderation policies to the deliberate design decisions made by these platforms—such as infinite scrolling, algorithmic recommendations, and push notifications—that are engineered to maximize engagement, even at the cost of individual or collective well-being.

This is hardly news to us. We have been studying and writing about the challenges posed by social media for the past decade. In this newsletter, we have written about the Facebook papers shared by a prominent whistleblower and why schools should ban smartphones.

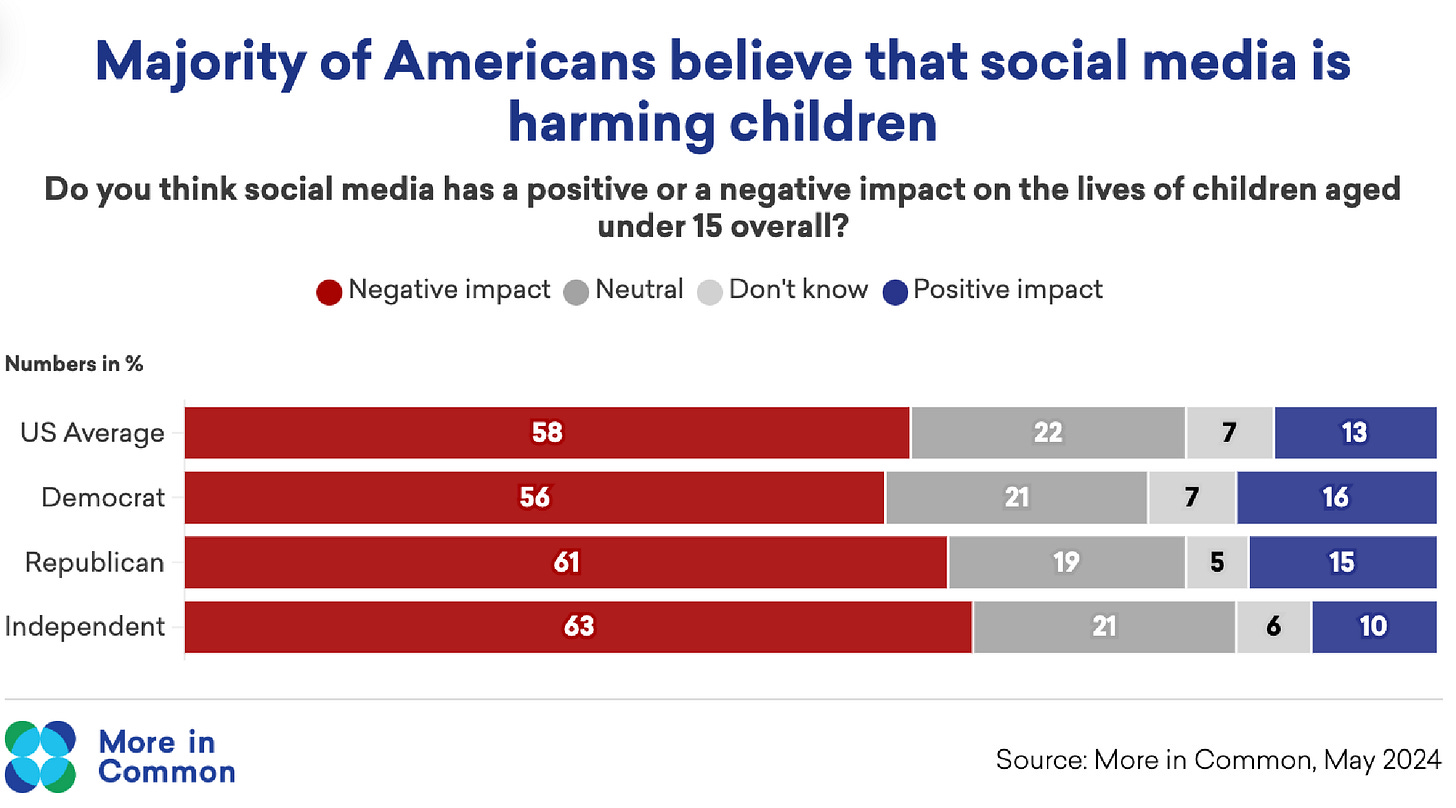

This should also come as no surprise to anyone who has been following these issues. A majority of people believe that social media is harming children—and this has now been the case for several years. It is also a rare case of bipartisan consensus, with Democrats, Republicans, and Independents all on the same page.

In many cases, the companies’ own research and safety teams are deeply aware of these problems. But senior management at these companies is often unwilling to implement changes. The pursuit of profit is a difficult temptation to resist, and they have focused on harvesting the attention of teenagers rather than ensuring their safety. For instance, Mark Zuckerberg wrote in a 2017 internal memo: “Teen time spent [should] be our top goal of 2017”, even after safety concerns were raised by employees.

Whistleblower Frances Haugen was one of the first employees to bring these concerns to the attention of the public. Her testimony and public interviews provided a better understanding of how Facebook engineers its platform and the consequences this has for spreading misinformation and fostering social conflict.

“Facebook is optimizing for content that gets engagement or reaction. Its own research is showing the content that is hateful, that is divisive, that is polarizing—it is easier to inspire people to anger than other emotions.” — Frances Haugen, on 60 Minutes.

A few days after the whistleblower went public, Facebook’s chief executive, Mark Zuckerberg, spoke with employees about the revelations. According to the New York Times, he claimed that Haugen’s assertions about how Facebook polarizes people were “pretty easy to debunk.”

Two court cases and numerous studies later, the data is firmly on her side.

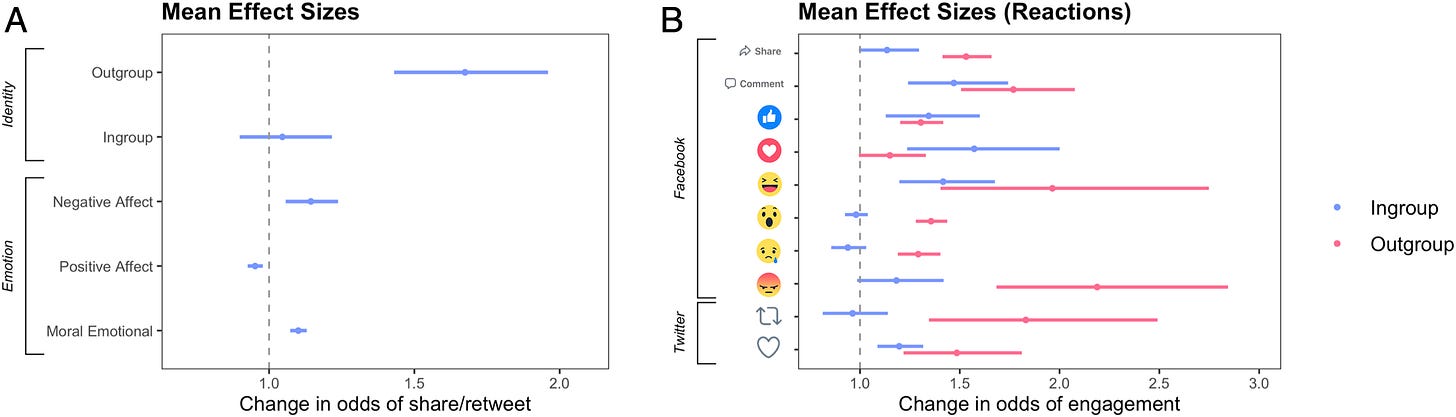

Debate about the potential harms caused by Facebook and other social media platforms is still up in the air on some issues, but findings on the spread of divisive and polarizing content (in the United States at least) seem clear. Before the recent interview with Haugen on 60 Minutes or her appearance before Congress, Jay published an analysis of nearly 3 million posts on Facebook and Twitter with his colleagues from Cambridge University, Steve Rathje and Sander van der Linden.

In our research, the single biggest predictor of social media “virality” was dunking on a political foe. Each word referring to the political out-group increased the odds of a social media post being shared by 67% (see figure below). If a post came from a Democrat, a word like “Republican” or “conservative” led to increased virality. And if a post came from a Republican, a name like “Joe Biden” did the same. And most of these posts were clearly negative.
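To make the 67% figure concrete, here is a minimal sketch of how a per-word increase in odds compounds. It assumes the effect behaves as a constant multiplier of 1.67 for each additional out-group word, which is the usual reading of a per-unit odds increase; the baseline share probability used in the example is purely hypothetical and is not taken from the study.

```python
# Assumption: the reported 67% increase acts as a constant multiplier
# of 1.67 on the odds for each additional out-group word.
ODDS_MULTIPLIER_PER_WORD = 1.67


def relative_odds(outgroup_words: int) -> float:
    """Odds of being shared, relative to a post with no out-group words."""
    return ODDS_MULTIPLIER_PER_WORD ** outgroup_words


def share_probability(baseline_probability: float, outgroup_words: int) -> float:
    """Convert a hypothetical baseline probability into odds, scale it, convert back."""
    baseline_odds = baseline_probability / (1.0 - baseline_probability)
    odds = baseline_odds * relative_odds(outgroup_words)
    return odds / (1.0 + odds)


if __name__ == "__main__":
    hypothetical_baseline = 0.10  # illustrative only, not a figure from the study
    for words in range(4):
        print(
            words,
            round(relative_odds(words), 2),
            round(share_probability(hypothetical_baseline, words), 3),
        )
```

Under this assumption the odds grow multiplicatively rather than additively, so a post with several out-group words is far more likely to be shared than a post with one.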

One example of a viral post that spread in Republican circles was from Breitbart, promoting a video entitled, “Every American needs to see Joe Biden’s latest brain freeze.” Meanwhile, a post that went viral from Democrats came from The Daily Beast, claiming that Mike Pence was blatantly lying about Covid-19.

When Steve, Sander, and Jay published a report about these data in The Washington Post, Facebook published a fierce rebuttal. They argued that “Extremism is bad for our business” and presented a limited set of studies that focused largely on the impact of Facebook in other countries.

Yet their own internal research, shared by the whistleblower, contradicts their rebuttal. According to a disturbing new analysis of the Facebook Papers by The Atlantic, employees at Facebook have long known that the social media giant amplifies extremism, spreads misinformation, and encourages political polarization. And they are growing increasingly angry with the way that company leadership has refused to incorporate these insights.

“Again and again, the Facebook Papers show staffers sounding alarms about the dangers posed by the platform—how Facebook amplifies extremism and misinformation, how it incites violence, how it encourages radicalization and political polarization. Again and again, staffers reckon with the ways in which Facebook’s decisions stoke these harms, and they plead with leadership to do more.

And again and again, staffers say, Facebook’s leaders ignore them.”

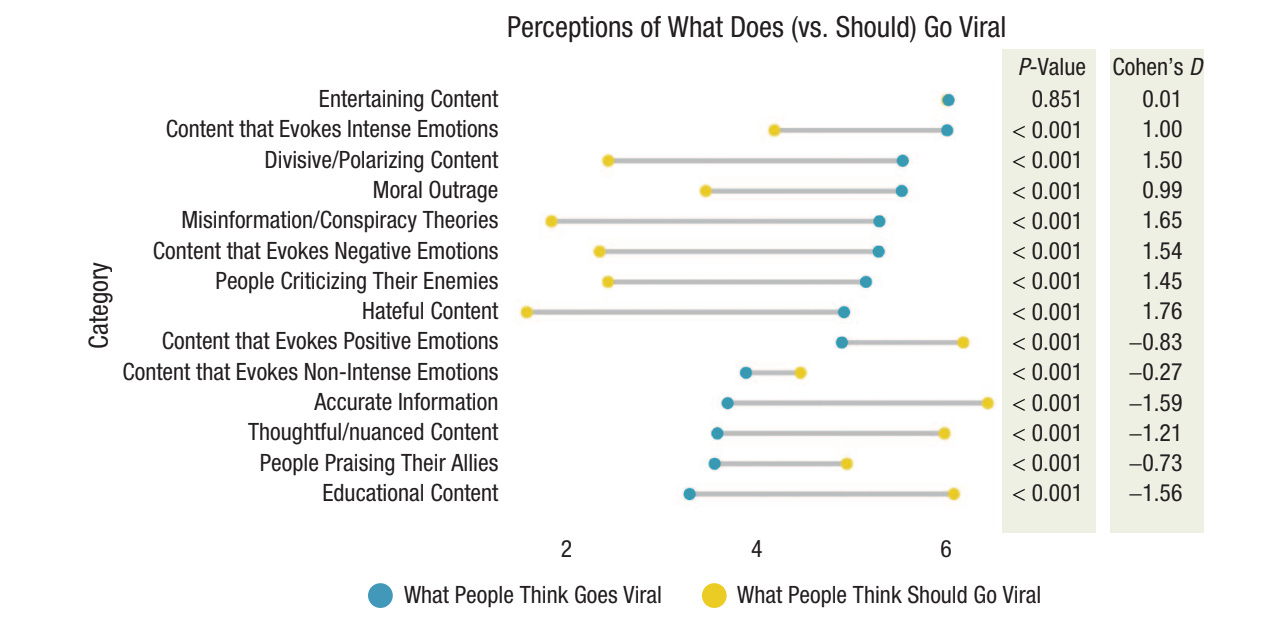

It also turns out that people don’t like this type of content. We asked a diverse sample of Americans how they feel about the content that spreads on social media. As you can see below, people believe that divisive content, moral outrage, negative content, high-arousal content, and misinformation are all likely to go viral online. However, they wish this type of content would not go viral on social media. Instead, they want to see far more positive content spreading online, such as accurate, nuanced, uplifting, and educational content.

The aversion to social media extends well beyond specific topics. About half of Gen Z wishes TikTok (47%) and X (50%) didn’t exist. That’s despite spending four hours a day on social media, which more than half of respondents to a new survey say is normal.

Scholars have been sounding the alarm about these problems for several years. Indeed, we devoted an entire chapter to toxicity online in our book “The Power of Us”. In the book, we describe a clever experiment that offers causal evidence that Facebook increases polarization and reduces well-being. A group of economists led by Hunt Allcott decided to find out what would happen if they paid people to leave Facebook for the four weeks leading up to the 2018 US midterm elections. They paid more than a thousand volunteers to log off the social network for a few weeks and compared them to a control group of volunteers who continued using Facebook.

This experimental design allowed the researchers to detect the influence of using Facebook on polarization and other potential harms…or, more accurately, whether ceasing to use the platform reduces these problems.

Not only did the volunteers who logged off Facebook report an increase in their psychological well-being, but they also became less politically polarized (across a variety of measures). Remarkably, the reduction in polarization from logging off Facebook for a single month was equivalent to nearly half the amount polarization has increased in the US since the mid-1990s!

In defense of Facebook, it is certainly not the only platform exacerbating social conflict by amplifying polarizing content and misinformation. We recently published a review of the research on the topic and found that Twitter/X is even more polarized than Facebook or WhatsApp in some countries. And the effects are mixed: one study in Bosnia, for example, suggests that Facebook might help expose people to different perspectives if their real-life social networks are homogeneous.

But when this social media technology was introduced to the Marubo tribe deep in the Amazon via access to Elon Musk’s Starlink, it revealed the same basic pattern we have seen over and over again in many studies. Members of this small community quickly became obsessed with social media, to the extent that the community had to shut down access to the internet each day to ensure they would hunt and gather enough food for their own survival.

More strikingly, it also spurred the kind of polarization we saw in our American samples. According to the NYT, “Elon Musk’s Starlink has connected an isolated tribe to the outside world — and divided it from within.” I described this natural experiment a few months ago on Andrew Yang’s podcast.

In the United States, where antipathy toward out-group partisans is at a 40-year high and often seems like a sectarian conflict, social media amplifies existing disagreements, facilitates the spread of conspiracy theories, and allows for the organization of anti-democratic activities (like the January 6th insurrection). But the Amazon example also illustrates that this technology can have a massive disruptive influence even in a community without these political dynamics.

This technology poses a serious problem for public health, as well. As Biden’s Press Secretary noted in July, twelve people produced 65% of anti-vaccine misinformation on social media platforms. At the time, all of them remained active on Facebook despite some being banned on other platforms (including ones that Facebook owns!). Indeed, news exposure from Facebook was one of the single biggest predictors of vaccine hesitancy in the United States—with people who only got their news from Facebook expressing greater vaccine hesitancy than Fox News viewers.

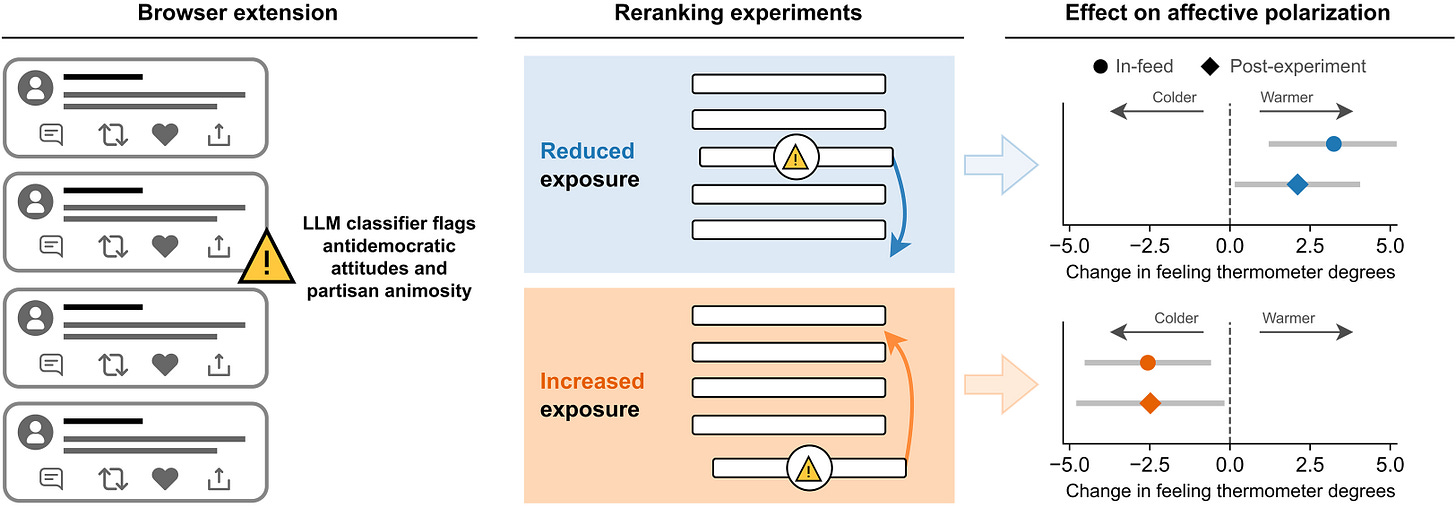

New research suggests that there may be some alternatives. A group of researchers from Stanford developed a novel method for reranking social media feeds. They found that increasing exposure to posts expressing antidemocratic attitudes and partisan animosity in the newsfeed increases affective polarization and negative emotions, whereas decreasing exposure reduces them (see figure below). These changes were comparable in size to three years of change in affective polarization in the United States.
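The general idea of reranking a feed can be sketched in a few lines. This is a simplified illustration, not the Stanford team's actual method: the `Post` fields, the `animosity` score (imagined here as the output of some classifier, on a 0 to 1 scale), and the `penalty` parameter are all hypothetical names introduced for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    post_id: str
    engagement: float  # hypothetical engagement-based ranking score
    animosity: float   # hypothetical 0..1 partisan-animosity score from a classifier


def rerank(posts: list[Post], penalty: float = 1.0) -> list[Post]:
    """Sort posts by engagement minus a penalty on animosity.

    penalty=0 reproduces a pure engagement ordering; larger values push
    high-animosity posts further down the feed.
    """
    return sorted(
        posts,
        key=lambda post: post.engagement - penalty * post.animosity,
        reverse=True,
    )


if __name__ == "__main__":
    feed = [
        Post("outrage", engagement=0.9, animosity=0.8),
        Post("news", engagement=0.7, animosity=0.1),
        Post("explainer", engagement=0.5, animosity=0.0),
    ]
    print([post.post_id for post in rerank(feed, penalty=0.0)])
    print([post.post_id for post in rerank(feed, penalty=1.0)])
```

The point of the sketch is that nothing is removed from the feed. The same posts are shown in a different order, which is why this kind of intervention is framed as a change to the algorithm rather than to content moderation.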

As political polarization and societal division become increasingly linked to social media activity, these findings provide a potential pathway for platforms to address these challenges through algorithmic interventions. These interventions may result in algorithms that not only reduce partisan animosity but also promote greater social trust and healthier democratic discourse across party lines.

It’s unlikely that everyone would be willing to take a four-week vacation from Facebook. But this research suggests that changes to algorithmic feeds can mitigate some of the harms of social media. There is a growing awareness that people need to take this research more seriously and think about the potential costs to society given the impact of Facebook, X, WhatsApp, and other social media platforms.

The problem with social media platforms is that many of their design features and algorithms are optimized for engagement, rather than accuracy, cooperation, or other civic values—including the values that promote a healthy democracy. It is increasingly clear that denying the body of internal and external research about these issues is simply no longer an option for Meta or the rest of us.

